WARNING: This is the _old_ Lustre wiki, and it is in the process of being retired. The information found here is all likely to be out of date. Please search the new wiki for more up to date information.

Architecture - Security

Note: The content on this page reflects the state of design of a Lustre feature at a particular point in time and may contain outdated information.

Introduction

This chapter outlines the security architecture for Lustre. Satyanarayanan gives an excellent treatment of the security in a distributed filesystem. Our approach seeks to follow the trail laid out in his discussion, although the implementation choices and details are quite different.

Usability

Only too often have security features led to a serious burden on administrators. Lustre tries to avoid this by using existing API’s as much as possible, particularly in the area of integration with existing user and group databases. Lustre only uses standard Unix user API’s for accessing such data for ordinary users. Special administrative accounts with unusual privileges, to perform backups for example, require some extra configuration.

Lustre, unlike AFS and DCE/DFS, does not mandate the use of a particular authorization engine or user and group database, but works with what is available. Lustre uses existing user and group databases and can hook into LDAP, Active Directory, NIS, or more specialized databases through the standard NSS database switches. For example, in an environment where a small cluster wishes to use the /etc/passwd and /etc/group files as the basis of authentication and authorization, Lustre can easily be configured to use these files.

We follow in the footsteps of the Samba and NFS v4 projects in using existing ACL structures, avoiding the definitions, development, and maintenance of new access control schemes.

Lustre implements process authentication groups, as they provide more protection against root setuid attacks, provided hardened kernels are used.

New features of Lustre are file encryption, careful analysis of cross realm authentication and authorization issues and file I/O authorization.

Taxonomy

The first question facing us is what the threats are. The threats are security violations, and Lustre tries to avoid:

- Unauthorized release of information.

- Unauthorized modification of information.

- Denial of resource usage.

The latter topic is only very partly addressed. Alternative taxonomies of violations and threats exist and include concepts such as suspicion, modification, conservation, confinement, and initialization. We refer to Satya’s discussion. The DOD categorization of security might fit Lustre broadly at the C2 level, controlled access protection, which includes auditing.

Layering

On the whole Lustre server software is charged with maintaining persistent copies of data and should largely be trusted. Clients can take countermeasures to avoid too much trust of servers by optionally sending only encrypted file data to the servers. While clients are much less controlled than servers, they carry important obligations for trust. For example, a compromised client might steal users’ passwords and render strong security useless. The security subsystem has many layers. Our security model, like much else in Lustre, leverages existing efforts and tries to limit implementation to genuinely new components. The discussion in this chapter uses the following division of responsibilities.

Trust model: When the system activates network interfaces for the purpose of filesystem request processing or when it accepts connections from clients, the interfaces or connections are assigned a GSS-API security interface. Examples of these are Kerberos 5, LIPKEY, and OPEN.

Authentication: When a user of the Lustre filesystem first identifies herself to the system, credentials for the user need to be established. Based on the credentials, GSS will establish a security context between the client and server.

Group & user management: Files have owners, group owners, and access control lists which make reference to identities and membership relations for groups and users of the system. Slightly different models apply within the local realm, where user and group id’s can be assumed to have global validity, and outside that domain, where a different user and group database would be used on client systems.

Authorization: Before the filesystem grants access to data for consumption or modification, it must do an authorization check based on identity and access control lists.

Cross realm usage: When users from one administrative domain require access to the filesystem in a different domain, a few new problems arise for which we propose systematic solutions.

File encryption: Lustre uses the encryption and sharing model proposed by the StorageTek secure filesystem, SFS [13], but a variety of refinements and variants have been proposed.

Auditing: For secure environments, auditing file accesses can be a major deterrent for abuse and invaluable to find perpetrators. Lustre can audit clients, meta-data servers, object storage targets, and access to the key granting services for user credentials and file encryption keys.

Lustre Networks and Security Policy

Network trust is of particular importance to Lustre to balance the requirements of a high performance filesystem with those of a globally secure filesystem. On the whole Lustre makes few requirements of trust on the network and can handle insecure and secure networks with different policies which will seriously affect performance. The aim is to identify those networks for which cryptographic activities can be avoided, in cases where more trust exists. An initial observation is that there are two extreme cases that should be covered:

Cluster network: Lustre is likely to be used in compute clusters over networks where:

- Network traffic is private to sender and receiver, i.e. it will not be used by 3rd parties.

- Network traffic is unaltered between sender and receiver.

Other network: On other networks, the trust level is much lower. No assumptions are made.

We realize that there are a variety of cases different from these two extremes that might merit special treatment. Such special treatment will be left to mechanisms outside Lustre. Examples of special treatment might be to use a VPN to connect a trusted group of client systems to Lustre with relaxed assumptions.

Lustre uses Portals. We will not change the Portals API to include features to address security. Instead, we will use Portals network interfaces to assign GSS security mechanisms to different streams of incoming events. Lustre will associate a security policy with a Lustre network. The policy is one of:

- No security

- GSS security with integrity

- GSS security with encrypted RPC data

- GSS security with data encrypted.

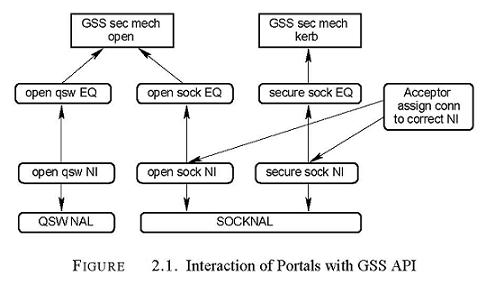

Binding GSS Security to Portals Network Interfaces.

Incoming and outgoing Portals traffic uses an instance of a network abstraction layer (NAL). Such an instance is called a Portals Network Interface. Lustre binds a Portals Network Interface to traffic from a group of network endpoints called a Lustre Network, which is identified by a netid. Certain networks, such as UDP, QSW or Myrinet networks, are connectionless, and on these it is less easy to intercept the traffic. Once Portals binds to the interface, packets may arrive and will face a default security policy associated with the event queue as described above. In the case of TCP/IP, a client connection (based on a socket file descriptor) cannot be made available to the server subsystems (MDS and OST) until it has been accepted. The accept is handled by a small auxiliary program called the acceptor. The basic acceptor functionality, as shown in figure XXX [need to add], is to accept the socket, determine from which Lustre netid the client is connecting, and give the accepted socket to the kernel level Portals NAL. The Portals NAL then starts listening for traffic on the socket, and interacts with the Portals library for packet delivery.

To summarize policy selection:

Connectionless networks: The security policy is associated with the interface at startup time, through configuration information passed to the kernel at setup time. If no configuration information is passed, a strong security backend is selected.

Connection-based networks: When the network has connections, the acceptor of connections decides what Portals NI will handle the connection. It thereby affects security decisions and assigns a security policy to a connection.
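The two selection paths can be sketched as follows. This is a minimal illustration, not Lustre code: the names `SecurityPolicy`, `policy_for_interface` and `Acceptor` are hypothetical, and treating "GSS security with data encrypted" as the strong default is an assumption, as is falling back to it for unknown netids.

```python
from enum import Enum
from typing import Dict, Optional


class SecurityPolicy(Enum):
    NONE = "no security"
    INTEGRITY = "GSS security with integrity"
    ENCRYPTED_RPC = "GSS security with encrypted RPC data"
    ENCRYPTED_DATA = "GSS security with data encrypted"


# Assumption: the "strong security backend" chosen when nothing is
# configured is taken to be the last policy in the list.
STRONG_DEFAULT = SecurityPolicy.ENCRYPTED_DATA


def policy_for_interface(configured: Optional[SecurityPolicy]) -> SecurityPolicy:
    """Connectionless network: the policy is fixed at interface setup time."""
    return STRONG_DEFAULT if configured is None else configured


class Acceptor:
    """Connection-based network: the acceptor picks the policy per connection."""

    def __init__(self, netid_policies: Dict[str, SecurityPolicy]):
        self._netid_policies = dict(netid_policies)

    def accept(self, netid: str) -> SecurityPolicy:
        # Assumption: connections from an unknown netid get the strong default.
        return self._netid_policies.get(netid, STRONG_DEFAULT)
```

For example, `Acceptor({"cluster0": SecurityPolicy.NONE}).accept("cluster0")` yields the relaxed policy for a trusted cluster network, while any other netid is handled with the strong default.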

We invoke the selected security policies before sending traffic or after receiving Portals events for arriving traffic. As shown in figure 2.1 this is easily done by using different Portals network interfaces and event queues. The event queues ultimately trigger the appropriate GSS backend to invoke traffic on an NI. Outgoing traffic is handled similarly at the level of a connectionless network interface. For TCP, connections are made in the kernel for outgoing traffic, and the kernel needs awareness of which security policy applies to a given network. At present this is a configuration option; if the need arises it could be negotiated with the acceptor on the server.

GSS Authentication & Trust Model

The critical question is what enforces security in Lustre, and what security enforcement is. Lustre uses the GSS-API as a model for authentication and integrity of network traffic. Through the GSS API we can be sure that messages sent to server systems originate from users with proven identities, according to a GSS security policy installed at startup or connection acceptance time. The different levels of security arise from different GSS security backends. On trusted networks we need ones which are very efficient to avoid disturbing the high performance characteristics of the filesystem, but we also need to be prepared to run over insecure networks.

The GSS-API

The GSS-API provides for 3 important security features. Each of these mechanisms is used in a particular security context which the API establishes:

- The acquisition of credentials with a limited lifetime based on a principal or service name.

- Message integrity.

- Message privacy.

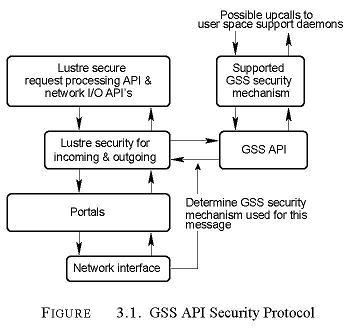

In order to do so, the GSSAPI is linked to a security mechanism. At present the GSSAPI offers the Kerberos 5 security mechanism as a backend. Typically the GSS-API is used as middleware between the request processing layer and a backend security mechanism, as illustrated in figure 3.1.
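To make the notions of a limited-lifetime context and message integrity concrete, here is a toy sketch. It is not the GSS-API and not a Kerberos mechanism: `SecurityContext` is a hypothetical class, the shared key stands in for whatever the real mechanism negotiates, and the method names merely echo the GSS-API calls `gss_get_mic` and `gss_verify_mic`. Message privacy is omitted.

```python
import hashlib
import hmac
import os
import time
from typing import Optional


class SecurityContext:
    """Toy stand-in for an established context shared by client and server."""

    def __init__(self, principal: str, lifetime_s: float, now: Optional[float] = None):
        self.principal = principal
        self._key = os.urandom(32)  # assumption: session key from the handshake
        start = time.time() if now is None else now
        self.expires_at = start + lifetime_s

    def expired(self, now: Optional[float] = None) -> bool:
        current = time.time() if now is None else now
        return current >= self.expires_at

    def get_mic(self, message: bytes, now: Optional[float] = None) -> bytes:
        """Compute an integrity check value for a message."""
        if self.expired(now):
            raise PermissionError("security context has expired")
        return hmac.new(self._key, message, hashlib.sha256).digest()

    def verify_mic(self, message: bytes, mic: bytes, now: Optional[float] = None) -> bool:
        """Accept the message only if the context is live and the MIC matches."""
        if self.expired(now):
            return False
        expected = hmac.new(self._key, message, hashlib.sha256).digest()
        return hmac.compare_digest(expected, mic)
```

A receiver that holds the same context rejects both altered messages and messages presented after the credential lifetime has run out.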

For Lustre to use the GSS-API the following steps have been taken:

- Locate or build a kernel level implementation of the GSS-API with support for the TriLabs-required Kerberos 5 security mechanism. This can be obtained from the NFS v4 project. The 0-copy properties of our networking API’s cannot be preserved with that implementation, and changes have to be made to avoid the use of XDR and SUN RPC.

- Modify the Lustre request processing and network I/O API’s to make use of the GSS API to provide their services. This will be original work requiring a fairly detailed design specification for peer scrutiny. The resulting API’s will be similar to those provided by the RPCSEC_GSS-secure RPC API used in NFS v4. They will include various pieces of data returned by the GSS calls in the network packets.

Removing credentials

The kdestroy command will remove Kerberos credentials from the user level GSS daemon. However, we also need to provide a mechanism to flush the kernel cache of credentials. If this is not handled by the user level GSS daemon, a lustre-unlog utility (à la kunlog for AFS) should be built.

Special cases

There are a few special connections that need to be maintained. The most important one is the family of MDS-OSS connections. The OSS should accept such connections and the MDS should have a permanently installed mechanism to provide GSS credentials to the authentication mechanisms. The OSS will treat the MDS principal as privileged, just like some other utilities such as backup, data migration and HSM software.

Process Identity and Authentication

Credentials should be acquired on the basis of a group of processes that can reasonably be expected to originate from the same authenticated principal. If that process group is determined by the user id of the process, vulnerabilities can arise when unauthorized users can assume this uid.

One of the most critical security flaws of NFS is that a root user can setuid to any user and acquire the identity of this user for NFS authorization. In NFS v4 this is still the case, except that the uid for which su is performed should have valid credentials.

The process authentication groups introduced by AFS can partly address this issue; however, they only provide true protection on clients with hardened kernel software that makes it difficult for the root user to change kernel memory. SELinux provides such capabilities. Without such, the extra security offered by PAGs is superficial and should not be provided.

PAGs may also help when processes running under a single uid on a workstation arise from separate network logins and should not be authenticated as a group. In environments where workstations provide strong authentication there may be no need for this, but PAGs can provide effective protection here.

Process Authentication Groups

Unix authorizes processes based on their uid; the uid defines a partition of the set of processes. Many distributed filesystems find this division of the processes too coarse to give effective protection; such systems introduce smaller Process Authentication Groups (PAG’s). A group of client processes can be tagged with a PAG. PAG’s are organized to give processes that truly originate from a single authentication event the same PAG and all other processes a different PAG. This can separate processes into different PAG’s even if the user id of the process is the same, and it can bundle processes together that run under different user id’s into the same PAG.

Properties of a PAG

The smaller group of processes for which authentication should give access is called a PAG, defined by the following:

- Every process should belong to a PAG.

- PAG’s are inherited by fork.

- At boot time, init has a zero PAG.

- When a process executes a login-related operation (preferably through a PAM module), this login process would execute a "newpag" system call which places the process in a new PAG.

- Any process can execute newpag and thereby leave an authentication group of which it was a member.
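The properties listed above can be modelled in a few lines. This is a sketch only: `Process` is a hypothetical user-space model, and allocating PAG numbers from a simple counter is an assumption made for illustration.

```python
import itertools


class Process:
    """Toy model of a process carrying a PAG number."""

    _next_pag = itertools.count(1)  # assumption: PAGs are handed out sequentially

    def __init__(self, uid: int = 0, pag: int = 0):
        self.uid = uid
        self.pag = pag  # init starts with the zero PAG

    def fork(self) -> "Process":
        # PAGs are inherited by fork.
        return Process(uid=self.uid, pag=self.pag)

    def newpag(self) -> int:
        # Any process may leave its current group by entering a fresh PAG.
        self.pag = next(Process._next_pag)
        return self.pag


init = Process()

# Two separate logins for the same uid end up in different PAGs.
login_a = init.fork()
login_a.uid = 1000
login_a.newpag()

login_b = init.fork()
login_b.uid = 1000
login_b.newpag()

# A shell started from the first login shares that login's PAG.
shell_a = login_a.fork()
```

Here `shell_a` and `login_a` form one authentication group, while `login_b` is kept apart even though all three run under uid 1000.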

Implementation

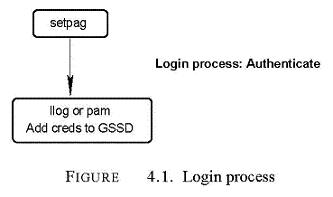

Lustre could implement a PAG as a 64 bit number associated with a process. Login operations will execute a setpag operation. A Pluggable Authentication Module (PAM) associated with kinit and login procedures, or the llog program, can establish GSSAPI supported credentials with a user level GSS daemon during or after login. It is at this point that the PAG for these credentials should be well defined.

When the filesystem attempts to execute a filesystem operation for a PAG for which credentials are not yet known to the kernel, an upcall could be made to the GSS daemon to fetch credentials for the PAG. The Lustre system maintains a cache of security contexts hashed by PAG. A GSSAPI authentication handshake will provide credentials to the meta-data server and establish a security context for the session; this is illustrated in figure 4.2.

Once the identity of the PAG has been established, both the client and the server will have user identities and group memberships associated with that identity. How those are handled will be discussed in the next section. Before authentication has taken place, a process only gets the credentials of the anonymous user.
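The cache-and-upcall behaviour described above might look roughly like this. The sketch is hypothetical: `ContextCache` and its methods are illustrative names, the "security context" is reduced to a principal string, and the GSS daemon is represented by a plain callable.

```python
from typing import Callable, Dict, Optional

ANONYMOUS = "anonymous"


class ContextCache:
    """Toy cache of security contexts keyed by PAG."""

    def __init__(self, upcall: Callable[[int], Optional[str]]):
        self._upcall = upcall  # stands in for the user level GSS daemon
        self._by_pag: Dict[int, str] = {}
        self.upcalls = 0

    def principal_for(self, pag: int) -> str:
        if pag in self._by_pag:
            return self._by_pag[pag]
        self.upcalls += 1
        principal = self._upcall(pag)
        if principal is None:
            # Not authenticated yet: act as the anonymous user, and do not
            # cache the miss so that a later login is picked up.
            return ANONYMOUS
        self._by_pag[pag] = principal
        return principal

    def flush(self, pag: int) -> None:
        """Drop cached credentials, e.g. after they were destroyed in user space."""
        self._by_pag.pop(pag, None)
```

With `daemon = {7: "alice@REALM"}` and `cache = ContextCache(daemon.get)`, the first operation from PAG 7 triggers one upcall and later ones are served from the cache, while a PAG the daemon does not know is treated as anonymous.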

Alternatives

AFS implementation

Design Note: The Andrew project used PAG’s for AFS authentication. They were "hacked" in the sense that they used 2 fields in the groups array. Root can fairly easily change fields in the group array on some systems, but apart from that this implementation avoided changing the kernel.

The Andrew project called the system interface call "setpag", which was executed in terms of getgroups and setgroups.

PAG and authentication and authorization data

Probably a better way to proceed is to assign a data structure with security context and allow all processes in the same PAG to point to it and take a reference on the data structure. This authorization data would have room to store a list of credentials for use on different filesets and security operations. newpag will be a simple system call decreasing the refcount on the current PAG of a process and allocating a new one. We could use /proc/pags to hold a list of PAG’s.

The User and Group Databases

Lustre uses standard (default) user and group databases and interfaces to these databases, so that either enterprise-scale LDAP, NIS, or Active Directory services can be queried, or local /etc/passwd and /etc/group databases can be used.

Users and groups appear fundamentally in two forms to the filesystem:

- As identities of processes executing filesystem calls.

- As user and group owners of files, thereby influencing authorization.

Lustre assumes that within an administrative domain the results of querying for a user or group name or id will give consistent results. Lustre also assumes that some special groups and users are created in the authentication databases for use by the filesystem. These address the needs to deal with administrative users and to handle unknown remote users.

The user and group databases enter into the filesystem-related API’s in just a few places:

Client authorization: The client filesystem will check group membership and identity of a process against the content of an ACL to enforce protection.

Server authorization: The server performs another authorization check. The server assumes the identity and group membership of client processes as determined by the security context. It sets the values of file and group owners before creation of new objects.

Client filesystem utilities: Utilities like ls require a means to translate user id’s to names and query the user databases in the process. The filesystem has knowledge of the realm from which the inodes were obtained, but the system call interface provides no means to transfer this information to user level utilities.

As we will explain below when covering cross realm situations there is a fundamental mismatch between the two uses and the UNIX API’s. Lustre’s solution is presented in the next section.

Lustre security descriptor and the Current Protection Sub Domain

The fundamental question is ’Can agent X perform operation Y on object Z?’. The protection domain is the collection of agents for which such a question can be asked. In Lustre, the protection domain consists of:

- Users and groups.

- Client, MDS, and OST systems.

For a particular user a current protection subdomain (CPSD) exists, which is the collection of all agents the user is a member of. This is shown in figure 5.1.

UNIX systems introduce a standard protection domain based on what the UNIX group membership and user identity are. These are obtained from the /etc/passwd and /etc/group files, or their network analogues through the NSS switch model. The UNIX task structure can embed this CPSD information in the task structure of a running process. A user process running with root permissions can use the set(fs)uid, set(fs)gid, and setgroups system calls, to change the CPSD information.

Things are more involved for a kernel level server system, to which a user has authenticated over the network. In that case, the kernel has to reach out to user space to fetch the membership information and cache it in the kernel to have knowledge of the CPSD. Such caches may need to be refreshed if the principal changes its uid and is authorized to do so by the server systems. Lustre servers hold CPSD attributes associated with a principal in the Lustre Security Descriptor (LSD).

Basic handling of users and groups in Lustre

When a client performs an authentication RPC with the server, the server will build a security descriptor for the principal. The security descriptor is obtained by an upcall. The upcall uses standard Unix API’s to determine:

- the uid and principal group id associated with the username obtained from the principal’s name

- the group membership of this uid

This information is held and cached, with limited lifetime, in kernel server memory in the LSD structure associated with the security context for the principal. Other information that will be held in the LSD is information applicable in non-standard situations.

- The uid and principal group id of the principal on the client. If the client is not a local client with the same group and user database, this is used as described in the next section.

- Special server resident attributes of the principal, for example:

(a) Is the principal eligible for the server to respect setuid/setgid/setgroups information supplied by the client? (These will only be honoured if the file system has an appropriate attribute also.)

(b) Which group/uid values may be set and will be respected?

(c) Is this principal able to access inodes by file identifier only (without a random cookie)? This is needed for Lustre raid repair and certain client cache synchronizations.

(d) Should this principal get decryption keys for the files even when identities and ACL’s would not provide these?

(e) Should this principal be able to restore backups (e.g. allow it to place encrypted files into the file system)?
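As a rough picture of what such a descriptor and its cache could hold, consider the sketch below. Everything here is hypothetical: the field names, the five-minute default lifetime, and the `LSDCache` class are assumptions for illustration, not the Lustre structures. `unix_upcall` shows how the identity could be resolved in user space with Python's `pwd.getpwnam` and `os.getgrouplist`, which wrap the standard Unix lookups.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, Optional, Tuple

Identity = Tuple[int, int, FrozenSet[int]]  # (uid, primary gid, group ids)


@dataclass(frozen=True)
class LSD:
    uid: int
    gid: int
    groups: FrozenSet[int]
    expires_at: float
    # Special server resident attributes; all default to "not granted".
    allow_setuid: bool = False
    allow_fid_only_access: bool = False
    allow_key_override: bool = False
    allow_restore: bool = False


def unix_upcall(username: str) -> Identity:
    """Resolve a username through the standard Unix databases (Unix only)."""
    import os
    import pwd

    entry = pwd.getpwnam(username)
    groups = frozenset(os.getgrouplist(username, entry.pw_gid))
    return entry.pw_uid, entry.pw_gid, groups


class LSDCache:
    """Caches descriptors for a limited lifetime, refreshing via the upcall."""

    def __init__(self, upcall: Callable[[str], Identity], lifetime_s: float = 300.0):
        self._upcall = upcall
        self._lifetime_s = lifetime_s
        self._entries: Dict[str, LSD] = {}
        self.upcalls = 0

    def lookup(self, username: str, now: Optional[float] = None) -> LSD:
        current = time.time() if now is None else now
        cached = self._entries.get(username)
        if cached is not None and cached.expires_at > current:
            return cached
        self.upcalls += 1
        uid, gid, groups = self._upcall(username)
        lsd = LSD(uid, gid, frozenset(groups), current + self._lifetime_s)
        self._entries[username] = lsd
        return lsd
```

A server would construct the cache as `LSDCache(unix_upcall)`; passing a different callable makes it easy to exercise the expiry logic without touching the system databases.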

Handling setuid, setgid and setgroups

There are several alternative ways in which these issues can be handled in the context of network security for a file system.

Privileged principals

A daemon offering GSS authenticated services can sometimes perform credential forwarding. Kerberos provides a way to forward credentials. This can provide excellent NFS v4 Lustre integration. This mechanism is external to Lustre.

When the service authenticates a user it can hand its credentials to the user level GSS daemon, which can use them to re-authenticate for further services. Therefore if Lustre requires a credential for a server process that has properly forwarded the credentials to the GSS daemon, it can transparently authenticate for this. Note that in this case the Lustre credential should be associated with the user id and the PAG (or optionally just with the user id).

If the server is a user level server, the setuid/setgid/setgroups calls can be intercepted to change the security descriptor associated with the process, in order for its credentials to be refreshed. If this is done the threat of root setuid, discussed above, is also eliminated.

Forwarding credentials

When an unmodified non GSS server is running on a Lustre client exporting file system information, there may be no facility for the server system to have access to credentials for the user. At the minimum the principal would have to log in to the server system and provide authentication information, then the PAG system would have to be bypassed to allow the user’s credentials to become available to the server’s PAG. AFS has recognized this as a serious usability issue.

In order to not render Lustre unusable in this environment, a server resident capability can be associated with the triple: client, file system and principal. This capability will allow the client to forward user id, group id and setgroup arrays.

Extreme prejudice is required and by default no client, file system and principal has this capability.
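A default-deny check on that triple can be sketched as below. The class and function names are hypothetical, and identities are reduced to a single uid for brevity.

```python
from typing import Optional, Set, Tuple


class ForwardingCapabilities:
    """Server resident set of (client, file system, principal) triples."""

    def __init__(self) -> None:
        self._granted: Set[Tuple[str, str, str]] = set()  # empty: nobody may forward

    def grant(self, client: str, fileset: str, principal: str) -> None:
        self._granted.add((client, fileset, principal))

    def may_forward(self, client: str, fileset: str, principal: str) -> bool:
        return (client, fileset, principal) in self._granted


def effective_uid(caps: ForwardingCapabilities, client: str, fileset: str,
                  principal: str, authenticated_uid: int,
                  forwarded_uid: Optional[int]) -> int:
    """Honour a client-supplied uid only when the exact triple was granted."""
    if forwarded_uid is not None and caps.may_forward(client, fileset, principal):
        return forwarded_uid
    return authenticated_uid
```

Because the capability is keyed on all three components, granting it to an export server for one file system does not let the same principal forward identities from another client or into another file system.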

Cross Realm Authentication and Authorization

In global filesystems such as Lustre, filesets can be imported from different realms. The authentication problems associated with this are suitably solved by systems such as Kerberos.

A fundamental problem arises from the clash of the user id / group id namespaces used in the different realms. These problems are present in different forms on clients, where remote user id’s need to be translated to sensible names in the absence of a UNIX API to do so. On servers, adding a remote principal to an access control list or assigning ownership of a file object to a remote principal requires the creation of a user id associated with that principal.

Lustre will address both problems transparently to users through the creation of local accounts. It will also have fileset options to not translate remote user id’s, translate them lazily, or translate them synchronously to accommodate various use patterns of the filesystem.

The Fundamental Problem in Cross Realm Authorization

File ownership in UNIX is in terms of uid’s and gid’s. File ownership on UNIX in a cross realm environment has two fundamental issues:

- Clients need to find a textual representation of a user id.

- Servers need to store a uid as owner of an inode, even when they only have realm and remote user id available.

Utility invocations such as ls -l file issue a stat call to the kernel to retrieve the owner and group, and then use the C library to issue a getpwent call to retrieve the textual representation of the user id.
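The two-step lookup can be reproduced from user space as a small illustration. The helper name `owner_name` is hypothetical, and the sketch uses Python's `pwd.getpwuid` (the by-uid lookup) rather than enumerating the database; it runs on Unix systems only.

```python
import os
import pwd


def owner_name(path: str) -> str:
    """Return the textual owner of a file the way a listing utility would."""
    uid = os.stat(path).st_uid  # step 1: the kernel reports a bare numeric uid
    try:
        # step 2: the local user database turns the number into a name
        return pwd.getpwuid(uid).pw_name
    except KeyError:
        # No local entry for this uid: only the number can be shown.
        return str(uid)
```

Note that nothing in either step carries a realm: the number returned by `stat` is interpreted against whatever database the client happens to use.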

The problem with this is that while the Lustre filesystem may have knowledge that the user name should be retrieved from a user database in a remote realm, the UNIX API has no mechanism to transfer this information to the application.

This is in contrast with the Windows API, where files and users are identified by SID’s which lie in a much larger namespace and which are endowed with a lookup function that can cross Windows domains (the function name to do so is LookupAccountSid).

When the filesystem spans multiple administrative domains, the Unix API’s are not suitable to correctly identify a user.

A server cannot really make a remote user an owner/group owner of a file nor can it make ACL entries for such users, unless it can represent the remote user correctly in terms of the available user and group databases.

Lustre handling of remote user id’s

When a connection is made to a Lustre metadata server the key question that arises is:

- Is the user / group database on the client identical to that on the server?

We call such a client local with respect to the servers. Lustre makes that decision as follows:

- The acceptor, used to accept TCP clients has a list of local networks. Clients initiating connection from a local network will be marked as local.

- There is a per-fileset default that applies when the TCP decision is not present. This decision may not be present when clients on other networks connect.
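The decision above could be expressed as follows. This is a sketch under assumptions: `LocalityPolicy` is a hypothetical name, local networks are given in CIDR notation, and a peer address of `None` stands for a connection on which the acceptor made no TCP-based decision.

```python
import ipaddress
from typing import Iterable, Optional


class LocalityPolicy:
    """Decides whether a connecting client shares the server's user database."""

    def __init__(self, local_networks: Iterable[str], fileset_default: bool = False):
        self._networks = [ipaddress.ip_network(n) for n in local_networks]
        self._fileset_default = fileset_default

    def is_local(self, peer_ip: Optional[str]) -> bool:
        if peer_ip is None:
            # No acceptor decision available: use the per-fileset default.
            return self._fileset_default
        address = ipaddress.ip_address(peer_ip)
        return any(address in network for network in self._networks)
```

For instance, `LocalityPolicy(["10.0.0.0/8"]).is_local("10.1.2.3")` marks a cluster node as local, while a client connecting from any other address is treated as non-local.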

Each Lustre system, client and server, should have an account nllu (“Non local Lustre user”) installed. On the client this is made known to the file system as a mount option; on the server it is a similar startup option, part of the configuration log. On the client it is important that there is a name associated with the nllu user id, to make listings look attractive.

When the client connects and authenticates a user, it presents the client’s uid of this user to the server. The client also presents the Kerberos identity of the user to the server, and this is used by the server to establish the server uid of the principal. For each client the server has a list of authenticated principals.

When the server handles a non-local client, it proceeds as follows for each uid that the server wants to transfer to the client or vice versa:

- If the uid is handled by the server and is among the list of authenticated user id’s, translate it.

- All other uids are translated to the server’s or client’s nllu user id.
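The translation rule amounts to a pair of lookup tables with the nllu id as fallback. In this sketch `UidMapper` is a hypothetical per-client object and the numeric ids are made up for the example.

```python
from typing import Dict


class UidMapper:
    """Per-client uid translation for a non-local client."""

    def __init__(self, server_nllu: int, client_nllu: int):
        self.server_nllu = server_nllu
        self.client_nllu = client_nllu
        self._client_to_server: Dict[int, int] = {}
        self._server_to_client: Dict[int, int] = {}

    def authenticate(self, client_uid: int, server_uid: int) -> None:
        """Record the uid pair established when a principal authenticates."""
        self._client_to_server[client_uid] = server_uid
        self._server_to_client[server_uid] = client_uid

    def to_server(self, client_uid: int) -> int:
        return self._client_to_server.get(client_uid, self.server_nllu)

    def to_client(self, server_uid: int) -> int:
        return self._server_to_client.get(server_uid, self.client_nllu)


mapper = UidMapper(server_nllu=99, client_nllu=65534)
mapper.authenticate(client_uid=501, server_uid=1000)
```

After this, files owned by server uid 1000 appear to the client as uid 501, and every owner the client has not authenticated as is shown as the client's nllu account.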

Limited manipulation of access control lists on non-local clients

In order to provide an interface to ACL’s from non local clients, group and user names must be given as text, for processing on the server. Lustre’s lfs command will provide an interface to list and set ACL’s. However, the normal system calls to change ACL’s are not available for remote manipulation of ACL’s.

Solutions in Other Filesystems

AFS: We believe there is no work around for the getpwent issues in the AFS client filesystem.

The Andrew filesystem has a work-around for the fundamental problem on the server side. When users gain privileges in remote cells that require them to appear as owners of files or in access control lists, the cklog program can be used and creates an entry in the FileSystem Protection DataBase (FSPDB) recording user id’s and group membership of the remote realm. The file server can now set owner, group owner, and ACL entries for the remote user correctly.

This creation is required only once, but allows the remote cell to treat a cross-realm user in an identical fashion as a local user. For details, see the AFS documentation [12].

Windows: The Windows filesystem stores user identities in a much larger field than a 32 bit integer and the fundamental problem does not exist in Windows. The Win32 function LookupAccountSid maps a security id to full information about the user, including the domain from where the sid originated. File owners are stored as sid’s on the disk.

NFS v4: This filesystem appears not to explicitly address this problem. NFS v4 transfers the file and group owners of inodes to the clients in terms of a string. On the whole this is a bad idea for scalability, as it forces the server to make numerous lookups of such names from userid’s, even when such data is not necessarily going to be used.

If it is desirable to give clients textual information about users, they should probably interact with the user databases themselves to avoid generating a server bottleneck.

MDS Authorization: Access Control Lists

Our desire is to implement authorization through access control lists. The lists must give Linux Lustre users POSIX ACL semantics. Given that we handle cross realm users through the creation of local accounts for those users, we can rely on the POSIX ACL mechanisms. Lustre will use existing ACL mechanisms available in the Linux kernel and filesystems to authorize access.

This is the same mechanism used by Samba and NFSv4.

Good but not perfect compatibility has been established between CIFS, NFSv4, and POSIX ACL’s. The subtle semantic differences between Windows, NFSv4, and POSIX ACL’s can be further refined by adding such ACL handling to the filesystems supported by Lustre.

A secondary and separate “access” control list may be added to filesets that have enabled file encryption. This ACL will be handled separately after the POSIX ACL has granted access to the inode.

Fid guessing

During pathname traversal the client goes to the parent on the MDS, going through ACL’s, mode bits, etc., to get its lookup authorized. When complete, the client is given the FID of the object it is looking for. If permissions on a parent of the fid change, a client may not be able to repeat this directory traversal. A well behaved client will drop the cached fid it obtained when it sees permission changes on any parents. To do so it uses the directory cache on the client.

A fid guessing attack consists of a rogue client re-using a fid previously obtained or obtained through guessing in order to start pathname traversal halfway through a pathname, at the location of the guessed fid. Protecting against access to MDS inodes through "fid guessing" is important in the case of restrictive permissions on a parent, and less strict permissions underneath.

To prevent this, the Lustre MDS generates a capability during lookup which allows the fid to be re-used for a short time upon presentation of the capability. Any fid based operation would fail unless the fid cookie is provided. This limits the exposure to rogue clients to a short interval, of which users should preferably be aware.
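One way to picture such a short-lived capability is a keyed MAC over the fid and an expiry time. This is an assumed construction for illustration, not the Lustre wire format: `CapabilityIssuer`, the 30 second lifetime, and the use of HMAC-SHA256 are all choices made for the sketch, and the capability is not bound to a particular principal here.

```python
import hashlib
import hmac
import os
import struct
import time
from typing import Optional


class CapabilityIssuer:
    """Issues and checks short-lived capabilities for fid based access."""

    _BODY = struct.Struct(">QQ")  # (fid, expiry in seconds)

    def __init__(self, lifetime_s: int = 30, secret: Optional[bytes] = None):
        self._lifetime_s = lifetime_s
        self._secret = secret if secret is not None else os.urandom(32)

    def _tag(self, body: bytes) -> bytes:
        return hmac.new(self._secret, body, hashlib.sha256).digest()

    def issue(self, fid: int, now: Optional[float] = None) -> bytes:
        """Called during an authorized lookup; returns the cookie for the fid."""
        current = time.time() if now is None else now
        body = self._BODY.pack(fid, int(current) + self._lifetime_s)
        return body + self._tag(body)

    def check(self, fid: int, capability: bytes, now: Optional[float] = None) -> bool:
        """A fid based operation succeeds only with a valid, unexpired cookie."""
        current = time.time() if now is None else now
        if len(capability) != self._BODY.size + hashlib.sha256().digest_size:
            return False
        body, tag = capability[: self._BODY.size], capability[self._BODY.size:]
        if not hmac.compare_digest(tag, self._tag(body)):
            return False
        cap_fid, expires = self._BODY.unpack(body)
        return cap_fid == fid and current < expires
```

A guessed fid without a cookie, a cookie issued for a different fid, and a cookie replayed after the lifetime has passed are all rejected, which bounds the exposure window as described above.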

Alternatives

NFS has made file handles "obscure" to achieve the same.

Implementation Details

A fundamental observation about access control lists is that typically there are a few access control lists per file owner, but thousands of files and directories with that owner. As a result it is not efficient, though widespread practice, to store a copy of the ACL’s with each inode.

The Ext3 filesystem has implemented ACL’s with an indirection scheme. We leverage that scheme on the server, but not yet on the client.
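The idea of indirection can be sketched as follows. This is not the Ext3 on-disk format; it is a hypothetical in-memory table (`AclTable`) that only illustrates why storing one copy of each distinct ACL, and a small reference per inode, is cheaper than a copy per inode.

```python
class AclTable:
    """Stores each distinct ACL once; inodes hold only a small integer id."""

    def __init__(self):
        self._ids = {}   # canonical ACL (tuple of entries) -> id
        self._acls = []  # id -> canonical ACL

    def intern(self, acl):
        """Return the id for this ACL, adding it to the table if it is new."""
        key = tuple(sorted(acl))
        if key not in self._ids:
            self._ids[key] = len(self._acls)
            self._acls.append(key)
        return self._ids[key]

    def lookup(self, acl_id):
        return self._acls[acl_id]

    def __len__(self):
        return len(self._acls)


table = AclTable()
acl = [("user:alice", "rw"), ("group:staff", "r")]
# Thousands of inodes with the same owner end up sharing a single stored ACL.
inode_acl_ids = [table.intern(acl) for _ in range(1000)]
```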

Auditing

Lustre uses a filter layer called smfs which can intercept all events happening in a filesystem and on OST’s.

Auditing happens on all systems. Auditing on clients is necessary to record access to cached information which only the client filesystem can intercept at reasonable granularity; operations that result in RPC’s are not cached for efficiency reasons. On the MDS systems, audit logs are perhaps the most important since they contain the first point of access to the file and directories. On the OSS’s a summary audit log can be written, with a reference to the entry on the MDS that needs to be looked at in conjunction with this. For this the objects on the OSS carry a copy of the FID of the MDS inode.

Lustre will send this information to the syslog daemon. The granularity of the information logged will be tunable. A tool is available to combine the information obtained from servers and clients and to scan for anomalies.

A critical piece of information that needs to be logged on the OSS is the full file identifier of the MDS inode belonging to an object. Moreover, file inodes on the MDS should contain a pointer to parent directories to produce traceable pathnames.
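As a rough illustration of how a log-combining tool could use the FID recorded on the OSS, the sketch below joins MDS and OSS audit records by FID. The record layout (dictionaries with `fid` and `mds_fid` keys) and the function names are assumptions made for this example, not the format of any actual Lustre audit log.

```python
from collections import defaultdict


def correlate(mds_records, oss_records):
    """Group MDS and OSS audit records by the FID of the MDS inode."""
    merged = defaultdict(lambda: {"mds": [], "oss": []})
    for rec in mds_records:
        merged[rec["fid"]]["mds"].append(rec)
    for rec in oss_records:
        # OSS objects carry a copy of the MDS inode FID, logged as "mds_fid" here.
        merged[rec["mds_fid"]]["oss"].append(rec)
    return dict(merged)


def orphan_oss_access(merged):
    """FIDs with object I/O on an OSS but no MDS entry: a possible anomaly."""
    return sorted(fid for fid, recs in merged.items()
                  if recs["oss"] and not recs["mds"])
```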

Alternatives

Such mechanisms are described in Howard Gobioff’s thesis [XXX] section 4.4.3.

SFS Style Encryption of File Data

The StorageTek SFS filesystem provides a very interesting way to store file data encrypted on disks, while enabling sharing of the data between organizations. SFS is briefly described in [13] and [14]. In this subsection, we review some of the SFS design and a proposed integration with Lustre. We also provide a more lightweight cryptographic file system capability that is much easier to implement.

Encrypted File Data

In SFS, file data can be encrypted. Each file has a unique random key, which is created at the time the file is created. It is stored with the file, but it is encrypted, and a third party agent, called the group server, must be accessed to provide the unencrypted file key. The key never changes, and remains attached to the file until the inode of the file is freed.

The file is encrypted with a technique called counter mode, see [15], section 2.4.5. Counter mode is a simple mechanism to encrypt an arbitrary extent in a file without overhead related to the offset at which the extent is located.

Ultimately this cryptographic information leads to a bit stream which is XORed with the file data. Patches probably exist for Linux kernels to introduce counter mode encryption of files relatively easily.
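The property that matters here, that any extent can be encrypted or decrypted knowing only its offset, can be shown with a small sketch. It assumes SHA-256 over the key and a block counter as a stand-in for a real block cipher such as AES; the function names and the 32-byte block size are choices made for this example only.

```python
import hashlib

BLOCK = 32  # bytes of keystream per counter value (the SHA-256 output size)


def keystream_block(key, counter):
    """Keystream for one block; a real system would use a block cipher here."""
    return hashlib.sha256(key + counter.to_bytes(8, "big")).digest()


def crypt_extent(key, offset, data):
    """Encrypt or decrypt `data` located at byte `offset` in the file.

    XOR is its own inverse, so the same function serves both directions.
    """
    out = bytearray()
    pos, done, end = offset, 0, offset + len(data)
    while pos < end:
        block_no, skip = divmod(pos, BLOCK)
        ks = keystream_block(key, block_no)[skip:]
        n = min(len(ks), end - pos)
        out.extend(b ^ k for b, k in zip(data[done:done + n], ks))
        pos += n
        done += n
    return bytes(out)
```

Because the keystream depends only on the key and the absolute position, reading bytes 10 to 50 of a large file needs only the blocks covering that range, not the data before it.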

Creating a New File

An information producer creates a new file and can define who can share this file. At the time of file creation the file is encrypted with a random key, and an access control list for the file is generated, granting access to the file. The group server is involved for two reasons:

- It encrypts the file key with a group server key.

- It signs the access control list, including the key, so that its integrity remains known.

The encrypted file key and the signed access control list are stored with the file.

The SFS Access Control List

SFS defines an access control list, which is perhaps an unfortunate term because it is more a sharing control description. We call the SFS access control list the SFS control list. The SFS control list contains identity descriptors which contain a name of a group (confusingly called a project in the SFS literature) and the file key encrypted with a public key of a group server. Once an application has access to the inode, it can scan the SFS control list and present an identity to a group server, which then returns the key to the file. This description, taken from the SFS papers, fails to address the issue of integrity of the ACL, for which some measures must be taken.

A variety of more complicated identities can be added to the SFS control list. Escrow can be defined by entries that state that any K of N identities must be presented to the group server before the key will be released. There is also a mechanism for an identity to be recursive with respect to group servers and require more than one group server to decrypt before the key is presented.

In principle anyone who can modify the SFS control list of the file can add further entries defining groups managed by other group servers, by encrypting such entities with the public key of the group server, provided the group server permits this operation.

The Group Services

The user, or the filesystem on behalf of the user, presents an identity found in the access control list and the user credentials to a group server. The group server checks that the user is a member of the group and returns the unencrypted key to the filesystem to allow it to decrypt the file. The group server can build an audit trail of access to files.
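The exchange with the group server can be sketched as below. This is a toy model under stated assumptions: SFS wraps file keys with a public key of the group server, whereas this example uses a symmetric HMAC-derived pad because the Python standard library has no public-key primitives. The class `GroupServer` and its methods are hypothetical names.

```python
import hashlib
import hmac
import os


class GroupServer:
    """Toy group server: wraps file keys and releases them to group members."""

    def __init__(self, members):
        self._key = os.urandom(32)  # group server key; never leaves the server
        self._members = members     # group name -> set of user names
        self.audit = []             # audit trail of key requests

    def _pad(self, nonce):
        return hmac.new(self._key, nonce, hashlib.sha256).digest()

    def wrap(self, file_key):
        """Encrypt a 32-byte file key at file creation time."""
        nonce = os.urandom(16)
        return nonce + bytes(a ^ b for a, b in zip(file_key, self._pad(nonce)))

    def release(self, user, group, wrapped):
        """Return the unencrypted file key if `user` belongs to `group`."""
        allowed = user in self._members.get(group, set())
        self.audit.append((user, group, allowed))
        if not allowed:
            raise PermissionError(f"{user} is not a member of {group}")
        nonce, body = wrapped[:16], wrapped[16:]
        return bytes(a ^ b for a, b in zip(body, self._pad(nonce)))
```

The wrapped key is what would be stored with the file; only the group server can turn it back into the file key, which is why the server has to be trusted.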

The group server must be trusted since it can generate keys to all files that have an ACL entry encrypted with the public key of the group server.

The group server acts a bit like a KDC, but it distributes file keys, not session keys.

Some aspects of the group service are the subject of a patent application filed by StorageTek.

Weaknesses Noted

Counter mode encryption: This technique has some weaknesses, called malleability, but adding mixing can fix this. Mixing algorithms are being worked on but will be patented. [see Rogaway at UCSC.]

Access control: The SFS access control lists have, at least theoretically, a weakness. While it is debatable if the system actually gives the key to a user, once the key has been given out to a user the user may retain access to the file data permanently. For a database file which remains in existence permanently, this is not an optimal situation.

Ordinary access control lists need to supplement the authorization. This will prevent unauthorized access to the file. However, a user with a key remains a more risky individual with respect to theft of the encrypted data.

Lustre SFS

Lustre provides hooks for a client node to invoke the services of the group key service as proposed by SFS. The SFS access control list will be stored in an extended attribute, in addition to normal ACL’s discussed above. A key feature of this group server is that principals can manipulate the database, in contrast with system group databases, which usually allow only root to make any modifications.

Lustre also implements a simpler encryption scheme where the group key service runs in the MDS nodes. This scheme uses the normal ACL with an extended attribute to store the encrypted file encryption key. The MDS has access to the group server key, and provides the client with the unencrypted key after authorization for file read or write succeeds, based on the normal POSIX ACL. Lustre also has a server option on principals that allows decryption on certain client nodes, regardless of the ACL contents. It is recommended that the acquisition of credentials for such operations follows extremely secure authentication, such as multiple principals using specially crafted frontends to the GSS security daemons.

Controlling encryption

An MDS target can have a setting to have none, all or part of the files encrypted. When only part of the files are encrypted, the lfs utility can be used to mark a directory subtree for encryption.

Encrypting Directory Operations

Encrypting directory data is a major challenge for filesystems. It appears possible to use a scheme like the SFS scheme to encrypt directory names. MDS directory inodes can hold an encrypted data encryption key that is used to encrypt & decrypt each entry in the directory.

Clients encrypt names so that the server can perform lookup on encrypted entries. The client receives encrypted directory entries and, for directory listings, the client performs decryption of the content of the directory.
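For the server to look up an encrypted name, the same name must always encrypt to the same bytes under a given directory key. The sketch below shows one way to get that property, a deterministic scheme in which the IV is derived from the name itself. It is an illustration built from HMAC-SHA256 only, not a vetted cipher and not the scheme Lustre specifies; all names are hypothetical.

```python
import hashlib
import hmac


def _subkey(dir_key, label):
    return hashlib.sha256(label + dir_key).digest()


def _stream(key, iv, n):
    """Keystream of n bytes derived from the key and IV."""
    out, ctr = b"", 0
    while len(out) < n:
        out += hmac.new(key, iv + ctr.to_bytes(4, "big"), hashlib.sha256).digest()
        ctr += 1
    return out[:n]


def encrypt_name(dir_key, name):
    """Client side: deterministic, so equal names give equal ciphertexts."""
    raw = name.encode()
    iv = hmac.new(_subkey(dir_key, b"iv"), raw, hashlib.sha256).digest()[:16]
    ks = _stream(_subkey(dir_key, b"enc"), iv, len(raw))
    return iv + bytes(a ^ b for a, b in zip(raw, ks))


def decrypt_name(dir_key, blob):
    """Client side: used when listing a directory."""
    iv, body = blob[:16], blob[16:]
    ks = _stream(_subkey(dir_key, b"enc"), iv, len(body))
    return bytes(a ^ b for a, b in zip(body, ks)).decode()


# Server side: the directory is just a map from opaque bytes to fids.
dir_key = b"\x01" * 32
server_dir = {encrypt_name(dir_key, "report.txt"): 0x42}
```

The trade-off of determinism is that the server can tell when two entries in the same directory have the same name, although it cannot read the name.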

File I/O authorization

Capabilities to access objects

The clients request the OSS to perform create/read/write/truncate/delete operations on objects. Truncate can probably be treated as write, particularly because Lustre already has append-only inode flags to protect files from truncation. The goal is to authorize these operations efficiently and securely. This section contains the design for this functionality.

When a client wishes to perform an operation on an object it has to present a capability. The capability is prepared by the MDS when a file is opened and sent to a client. Properties of the capabilities are:

- They are signed with a key shared by the MDS and OSS

- Possibly specify a version of an object for which the capability is valid

- They specify the fid for which objects may be accessed

- They specify what operations are authorized.

- A validity time period is specified, assuming coarsely synchronous clocks between the MDS and OSS.

- The kerberos principal for which the capability is specified is included in the capability.
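The properties listed above can be put together in a minimal sketch. It assumes an HMAC-SHA256 signature with the key shared by the MDS and OSS and a JSON encoding of the capability body; the field names, the `clock_skew` allowance and both function names are assumptions for illustration, not the Lustre wire format.

```python
import hashlib
import hmac
import json


def sign_capability(shared_key, fid, ops, expires, principal, version=None):
    """MDS side: build and sign a capability when a file is opened."""
    body = json.dumps(
        {"fid": fid, "ops": sorted(ops), "expires": expires,
         "principal": principal, "version": version},
        sort_keys=True,
    ).encode()
    return body, hmac.new(shared_key, body, hashlib.sha256).digest()


def oss_authorize(shared_key, body, mac, fid, op, principal, now, clock_skew=5):
    """OSS side: verify the signature, then check each claimed property."""
    expected = hmac.new(shared_key, body, hashlib.sha256).digest()
    if not hmac.compare_digest(mac, expected):
        return False  # forged or altered capability
    cap = json.loads(body)
    return (cap["fid"] == fid
            and op in cap["ops"]
            and cap["principal"] == principal
            # Clocks are only coarsely synchronized, so allow a small skew.
            and now <= cap["expires"] + clock_skew)
```

The OSS never contacts the MDS during I/O: possession of the shared key is enough to check that the MDS authorized the operation.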

Network is secure/insecure

If the network is secure, capabilities cannot be snooped off the wire, so no network encryption is needed. However, normally capabilities have to be transmitted in an encrypted form between the MDS and the client and between the client and the OSS to avoid stealing the capability off the wire.

GSS can be used for that. If GSS authenticates each user to the OSS a particularly strong scenario is reached.

Multiple principals

If a single client performs I/O for multiple users, the client Lustre software establishes capabilities for each principal through MDS open. Ultimately the I/O hinges on a single capability still being valid.

Revocability and trusted software on client

If a malicious user is detected, all OSS’s can refuse access through a “blacklist”. This leads to immediate revocability.

If client software is trusted, clients will discard cached capabilities associated with files when permissions change, for example. Cached capabilities only exist if cache of open file handles is used. If software on clients cannot be trusted, a client may regain access to the file data as long as his credentials are valid.

This could be refined by immediately expiring capabilities on the OSS’s, by propagating an object version number to the OSS’s and including it in the capability. This would slow down setattr operations, but increase security.
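The two revocation mechanisms, the blacklist and the object version number, can be sketched from the OSS point of view. The class `OssObjectStore` and its methods are hypothetical, and the capability is reduced to the version it was issued for.

```python
class OssObjectStore:
    """Toy OSS state used to decide whether a capability is still acceptable."""

    def __init__(self):
        self.blacklist = set()  # principals refused by all OSS's
        self.versions = {}      # fid -> current object version

    def bump_version(self, fid):
        """Propagated from the MDS on setattr; this is the extra setattr cost."""
        self.versions[fid] = self.versions.get(fid, 0) + 1

    def admit(self, principal, fid, cap_version):
        if principal in self.blacklist:
            return False  # immediate revocation of a malicious user
        # A capability issued for an older version expires at once.
        return cap_version == self.versions.get(fid, 0)
```

With the version check, a client holding an old capability loses access as soon as the permission change reaches the OSS, even if its software cannot be trusted to discard the capability.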

Corner cases

Cache flushes

Cache flushes can happen after a file is closed. If file inode capability cookies are replicated to objects, this can lead to problems, because a cache flush could encounter a -EWRONGCOOKIE error when no open file handle is available to re-authorize the I/O. If cookies are replicated, the data therefore needs to be flushed when the file is closed; postponing closes has proven to be very hard.

Replay of open after recovery

If files saw permission reduction changes while open, replay of open involves trusting clients to replay honestly, or including a signed capability to the client to replay open with pre-authorized access on the MDS. At present Lustre checks permission on replay again, so open replay may not be transparent and may cause client eviction.

Client open file cache

With the client open cache, reauthorization after the initial open is possible but somewhat pointless. If the client software cannot be trusted, data could be shared between processes on the client anyway. Lustre uses the client to re-authorize opens from the open cache.

Write back cache

With the write back cache, a client should be authorized to create inodes with objects and set initial cookies on the objects it creates. For the master OSS, where the objects will finally go, such authorization should involve an MDS-granted capability; for the cache OSS, the client can manage security.

Pools

Pools have a security parameter attached to them to authorize clients in a certain network to perform read, modify, create, delete operations on objects on a certain OST. This authorization is done as part of file open, create and unlink. The MDS will not grant capabilities to perform operations on objects not allowed by the pool descriptor.
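A minimal sketch of the pool check follows. The pool descriptor is modelled here as a mapping from a (client network, OST) pair to the set of permitted operations; that layout, the example names and the function `grantable_ops` are assumptions made for this illustration.

```python
def grantable_ops(pool, client_net, ost, requested):
    """MDS side: restrict the requested operations to what the pool allows."""
    allowed = pool.get((client_net, ost), set())
    return set(requested) & allowed


# Hypothetical pool descriptor: the compute network may read and modify
# objects on OST0001, while the login network may only read them.
pool = {
    ("tcp0", "OST0001"): {"read", "modify"},
    ("tcp1", "OST0001"): {"read"},
}
```

The MDS would then issue a capability only for the operations this function returns, so a client on a read-only network never obtains a capability to create or delete objects.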

Odds and Ends

Recovery and the security server

The security server provides GSS/kerberos (or other GSS services) and networked user/group database services to Lustre. This is 3rd party software and Lustre has not planned modifications to it to become failover proof. The following details the situation further:

The software will consist of:

1. LDAP services; here the client is the C library, which queries that database, partly through PAM modules and utilities.

2. kerberos KDC; here the client is the client and server GSS daemons and kerberos utilities like kinit, their library equivalents and PAM modules.

The server parts of 1 and 2 can easily be made redundant as standard IP services. For 1 & 2, client server protocol failure recovery would consist of retries and transaction recovery code for the services. This recovery, for the protocols in 1 & 2, would be completely outside the scope of Lustre. It just _may_ exist already, but I doubt these protocols have good retry capabilities.

However, if Lustre components (MDS, OSS and clients) fail and recover, they will re-use these services appropriately to recover. In some cases Lustre’s retry mechanisms may, by coincidence, invoke appropriate retry on protocols 1 & 2.

Renewing Session Keys

Long running jobs need to renew their session keys. Lustre will contain sufficient error handling to refresh credentials from the user level GSS daemons transparently.

Portability to Less Powerful Operating Systems

When Lustre is running as a library on a system which may not have access to IP services, some restrictions in the security model are required. For example a GSS security backend running on a service node operating the job dispatcher should supply a context that can be used by all client systems. [XXX: Is this the Red Storm model? It does not fit BG/L.]

Every effort will be made to implement a single security infrastructure and treat such special cases as policies.

Summary Tables

| attribute | structure system | containing data structure | notes | |

| pag | client | current process & user level GSS daemon | a number belonging to a process group, authenticated process groups | |

| ignore pag | client | super block | do not use pags, but use uid’s to get | |

| client remote | client | super block | treat this client as one in a remote domain | |

| gss context | client/MDS/OSS | associated with a principal and service export | a list of GSS supplied contexts associated with the export/import on servers/clients | |

| LSD | MDS | associated with a principal and service export | describes attributes and policies for a particular principal using a file system target. | |

| security policy handler | MDS/OSS | lustre network interface | methods describing the server security in effect on this network | |

| root squash | MDS | MDS target descriptor | root identity is mapped one-way on the server | |

| target allow setid | MDS | MDS target descriptor | grant certain client/principals setid capability | |

| modify cookie | MDS | MDS target descriptor | when this flag is set, changes to mode bits or owners will cause the version to be modified on MDS and OSS’s associated inodes. | |

| principal allow setid | MDS | LSD | allow principal setid | |

| principal setids | MDS | LSD | which id values can be set by a principal (ANY is a permitted value) | |

| client allow setid | MDS | LSD | when a principal is found that has setid the client list is given to the MDS | |

| local or remote domain | MDS | lustre connection | is this export to a local or remote client, given to the kernel by the acceptor upon connect, or else set as a configured per server default associated with the network. A client may override and request to be remote during connection. | |

| client uid-gid/server uid-gid | MDS | LSD | client and server uids/gids associated with the security context, used for remote clients | |

| Lustre unknown uid/gid | client/MDS | superblock/LSD | unknown user id to be used with this principal when translating server inode owners/client owner/groups, used only with remote clients. | |

| groups array | MDS | LSD | server cached group membership array | |

| mds directory inode cookie | MDS | audit mask | random number to authorize fid based access to MDS inodes | |

| inode crypt key | MDS | mds inodes | random file data encryption key, encrypted with the group server key | |

| parent fid | MDS | mds inodes | the fid of a parent inode of a file inode, for pathname reconstruction in audit logs | |

| file inode cookies | MDS/OSS | MDS inodes/oss objects | random numbers enabling authorization of I/O operations | |

| file crypt master key | LGKS | LGKS memory | key to decrypt file encryption keys | |

| MDS audit mask | MDS | target smfs filter file system | mask to describe what should be logged | |

| Client audit mask | client filter | super block of filter | mask to describe what should be logged | |

| OSS audit mask | target smfs filter file system |

Data structures and variables

Client configuration options

- remote lustre user id: mount option rluid=<int>

- remote lustre group id: mount option rlgid=<int>

- client is remote: mount option remote

- don’t use pag: mount option nopag

MDS configuration options

| feature | description |

| network secure | GSS|Integrity|Encrypt> |

| principal db | allow setid <principal> <allowed setuids> <allowed setgids> <allowed targets> <allowed clients> |

| | allow decrypt <principal> <allowed targets> <allowed clients> |

| | allow fid access <principal> <allowed targets> <allowed clients> |

| target security parameters | no root squash <allowed targets> <allowed clients> |

| pool security parameters | m|r|u> |

| audit | audit <audit options> <what target> <what clients> |

| encrypt | none|partial> <what target> |

| file encryption master key | <key> |

The <what clients> descriptors are lists of netids and lists of (netid, nid, pid) triples.

OSS configuration options

The OSS needs a principal DB to grant the MDS and certain administrative users raw object access without cookies.

- principal db: allow raw <principal> <allowed targets> <allowed clients/mds>

Extended attributes

| What | System | data structure | description |

| dir cookie | MDS | MDS dir inode | random 64-bit number to avoid fid guessing attack |

| file read cookie | MDS/OSS | MDS file inodes & objects | Part of the capability to authorize I/O |

| crypt key | MDS | encrypted MDS inodes | encrypted inode encryption key |

| parent inode pointer | MDS | all MDS inodes | pointer to a directory inode and MDS containing this inode |

| crypt subtree | MDS | MDS directory inodes | all file inodes under this directory should be encrypted |

Changelog

Version 4.0 (Sep 2005) Peter J. Braam, update for security deliverable.

Version 3.0 (Aug 2003) Peter J. Braam, rewritten after security CDR

Version 2.0 (Dec. 2002) P.D. Innes -updated figures and text, added Changelog

Version 1.0 P. Braam -original draft