
Architecture - GNS

Note: The content on this page reflects the state of design of a Lustre feature at a particular point in time and may contain outdated information.

Global Namespaces for Filesystems

A global namespace for a distributed filesystem provides a globally valid single directory tree to all clients of the filesystem. Typically the tree contains folder collections, also known as filesets or volumes, from many different servers which are grafted into the namespace. An important characteristic of such trees is that they are self-describing, i.e. they require little or no configuration information on the clients. We describe how global namespaces require mount-point objects stored in the file system. We address the path name traversal of mount-point objects and compare approaches from AFS/DCE DFS and Coda to automounting filesystems such as autofs, which are based on directory information. Two further cases which require special attention are persistent, filesystem-based caches for filesets on clients and their relation to the global namespaces. Finally, when global filesystems are accessed on the servers where some of the filesets are stored in local filesystems, their interaction with the namespace is of particular interest. We describe how InterMezzo and Lustre use a Linux namespace module implemented by the author to provide the required features.

Acknowledgement: Lee Ward participated heavily in discussions leading to the approach described here.

Introduction

A global namespace for a filesystem unifies the directory trees available from multiple file servers. This paper describes a global namespace system which overcomes some difficulties with existing systems. The intent is to eliminate configuration data on clients and to provide a directory tree which is valid from every workstation in the installation and remains valid when configurations are updated.

Global namespaces were perhaps first introduced in the Andrew FileSystem (AFS) and have since been used in many different forms in other environments. In AFS, the global namespace provides access to filesets (called volumes in AFS lingo), and typically each user has a unique fileset as a home directory, possibly along with filesets associated with projects. An AFS namespace can unify tens of thousands of filesets, distributed over different administrative AFS domains called cells. The traversal of a fileset mount-point takes place within a Unix mounted AFS filesystem. AFS does not rely on configuration data on the client to traverse mount-points, but contacts a server to obtain fileset location data. Coda, DCE/DFS, and Microsoft DFS use similar mechanisms.

Another mechanism for global namespaces is provided by the automounter family (amd and autofs). These provide magical file systems that detect the traversal of mount-points. When the traversal takes place, a mount map is consulted and a Unix mount is executed to bring the new filesystem into the directory tree. Mount maps can be held in configuration files or stored in NIS or LDAP directory services. The automounter offers global namespaces for heterogeneous filesystems, e.g. it can combine NFS and local filesystem mounts. The initial amd automount daemons were special NFS servers, but with Solaris 2.6, Sun introduced an autofs filesystem and an advanced automount daemon.

The purpose of this paper is to describe a global namespace design that is slightly more general. It combines the advantages of the AFS system with the newer features offered by the automounter.

The implementation details for global namespaces are in fact somewhat involved. We indicate a solution somewhat different from existing implementations and provide a roadmap for incorporating global namespaces into Linux environments building on local filesystems, NFS, InterMezzo, and Lustre.

Acknowledgment: This paper was written with support from Tacitus Systems, Inc.

Fundamental Definitions and Requirements

Unix filesystems can be mounted on directories. There are no special requirements on directories acting as mount-points; they can be empty or non-empty, and can have any name. The mount command parses the command line options or the /etc/fstab file and issues a system call which provides the kernel with the device, filesystem driver, and other parameters required to mount a filesystem on a directory. The root directory of the filesystem covers the mount-point directory when the mount has taken place. In the kernel the virtual filesystem is responsible for handling the traversal of mount-points. From this we see that (1) there is no mount-object in a Unix filesystem; mounts are instead described in /etc/fstab, and (2) there is no automatic mounting on directories when mount-points are entered.

Mounting filesystems is done by covering the so-called mount-point with the root directory of the new filesystem. Inside the VFS a special mechanism, typically a vfs_mount structure, is used to link the covered mount-point directory to the root directory of the mounted filesystem. It is important to note that typically the name of the root of a filesystem is inherited from the parent filesystem, while the inode belongs to the mounted filesystem. Special handling of mount-points happens, for example, when .. is encountered in a pathname, at a filesystem root.

The Andrew FileSystem contains mount-objects which it represents as symbolic links whose data contains the mounting information. While one can argue that a separate object to represent mount-points might be more natural, there are significant advantages in making mount-objects ordinary filesystem objects. Such objects can be created, modified, and removed easily without changes to system software, even when the filesystem is accessed through NFS or SMB servers. While AFS, Coda, and DCE/DFS store mount-points in symbolic links, they nevertheless manipulate such objects with a special ioctl on the filesystem.

The simplest implementation of a global namespace would introduce mount-objects that carry the name of the mount-point and mount filesets or filesystems on these objects when a lookup operation on the object is performed. This turns out to be inefficient. In many cases a lookup is only performed to get the attributes of the object. If a directory such as home were to contain thousands of mount-objects for users' home directories, a mount storm would ensue. This is a deficiency of the AFS system. As we will see, recent versions of autofs have overcome this problem.

In order to build flexible global namespaces the following requirements appear reasonable:

Fileset and filesystem support: The system should provide a global namespace which includes both Unix filesystem mount-points and fileset mount-points. The ability to mount filesets and filesystems in multiple locations is desirable.

Local and networked configuration data: Configuration data should be fetched from network servers, but the system should also be able to use locally stored data.

Activation, caching, and releasing: Mount-points should be cached objects which can be released after having been idle. Retry storms after failures and cluster state changes must be avoided by limiting the retry frequency. Invalidations of mount-points should be possible on a timeout or callback basis. Mounting a mount-object should not be triggered merely by lookups of the mount-point itself.

Mount-objects: Mount-objects should preserve full Unix semantics, i.e. they are directories and contribute to the link count of the parent directories. Invisible mount-objects, such as indirect mount maps, are to be avoided. Traversal of symbolic links to reach the roots of filesets and filesystems is to be avoided. Handling of mount-objects in a filesystem should be a mount option for that filesystem. Preferably, mount-objects should be ordinary filesystem objects which can be created without special administrative tools.

Mount-object manipulation: Mount-objects should be filesystem objects that can be created through standard APIs.

Authorization: Mounted mount-objects should be protected by their access control list or another filesystem protection mechanism. The process of mounting a mount-object should require authentication and authorization, using the identity and authentication of the process which triggers the mount.

Update handling: Modifications to mount-objects should trigger events within a reasonable amount of time on all systems that have the mount-object.
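The requirement to limit the retry frequency can be illustrated with a small sketch. The text does not prescribe a policy; exponential backoff per mount-object is an assumption made here, and the class name, parameters, and example path are hypothetical.

```python
import time


class RetryGate:
    """Limit how often a failed mount is retried for each mount-object.

    Assumption: exponential backoff. The text only asks that the retry
    frequency be limited and does not name a policy.
    """

    def __init__(self, base_delay=1.0, max_delay=60.0, clock=time.monotonic):
        self.base_delay = base_delay
        self.max_delay = max_delay
        self.clock = clock
        # mount-object path -> (consecutive failures, earliest next attempt)
        self._state = {}

    def may_attempt(self, path):
        """Return True if a mount attempt for `path` is allowed right now."""
        _, not_before = self._state.get(path, (0, float("-inf")))
        return self.clock() >= not_before

    def record_failure(self, path):
        """Double the wait after each consecutive failure, up to max_delay."""
        failures, _ = self._state.get(path, (0, float("-inf")))
        failures += 1
        delay = min(self.base_delay * 2 ** (failures - 1), self.max_delay)
        self._state[path] = (failures, self.clock() + delay)
        return delay

    def record_success(self, path):
        """A successful mount clears the failure history."""
        self._state.pop(path, None)
```

A daemon using such a gate would call may_attempt before each mount and answer the triggering lookup with an error while the gate is closed, so that a failed server does not cause a storm of repeated mount attempts.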

The Andrew FileSystem and Autofs

In this section we will review the functionality of the AFS fileset handling and the transient features of autofs. Both the autofs/automounter and the AFS global namespaces provide important insights and mechanisms which we exploit in our system.

Autofs is a filesystem which transparently mounts filesystems. There are implementations for Linux and Solaris (and likely for other systems), and the Solaris implementation is at present more fully featured. A good overview of this system is found in [17].

Autofs is a filesystem that contains mount-objects. It intercepts lookup calls on directories and interacts with a user level automount daemon to automatically mount filesystems. Earlier versions of autofs intercepted the VFS issued lookup calls for the mount-point itself. Prior to the return of the correct inode to the VFS layer, autofs requested the automount daemon to mount the directory. The automount daemon uses configuration files or network databases to locate the mount information associated with the directory name which autofs presents. Next it creates the directory under the autofs mount-point, if it doesn’t exist yet, and executes the mount command for that directory. It then returns control to autofs, which gives the mount-point inode to the VFS. Autofs can be used to build global namespaces by incorporating symbolic links into the filesystem which point to directories under an autofs mount-point. Upon traversal such symbolic links transparently lead into a mounted filesystem. Such situations are referred to as indirect maps and are suitable for mounting, for example, a family of home directories. Autofs can also be attached to a single existing directory, which it mounts upon traversal. Autofs is capable of caching mounted filesystems, and releasing filesystems that have not been used for a given amount of time.

In recent versions, several refinements were made that are important. First, in the case of indirect maps, autofs can execute a readdir operation, based on the entries in the mount map. In this way, directory listings appear normally, while in early versions only the already mounted entries would appear. Early versions of autofs mounted the filesystem when the mount-point was looked up. This gave rise to mount storms in common situations (such as doing ls -l under an autofs mounted directory). This was solved by only invoking the mount when a lookup is done for an entry in the mounted directory, or when the mounted directory is opened with an opendir system call. Finally, autofs gained support for dealing with trees of mount-points and also performing multiple mounts in parallel.
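The difference between the two trigger policies can be made concrete with a toy model. This is only an illustration of the reasoning above, not autofs code; the operation names and the function are hypothetical.

```python
def count_mounts(operations, mount_on_lookup):
    """Count the mounts triggered by a sequence of (operation, path) pairs.

    Hypothetical operation names used by this model:
      "lookup"       - lookup of the mount-point itself (e.g. stat in ls -l)
      "opendir"      - opening the mount-point directory
      "lookup_child" - lookup of an entry inside the mount-point

    mount_on_lookup=True models the early behaviour (mount as soon as the
    mount-point is looked up); False models the later behaviour (mount
    only when the directory is actually entered).
    """
    mounted = set()
    for op, path in operations:
        enters = op in ("opendir", "lookup_child")
        if enters or (op == "lookup" and mount_on_lookup):
            mounted.add(path)
    return len(mounted)


if __name__ == "__main__":
    # An "ls -l" over a directory holding 1000 mount-points only looks
    # each entry up; nothing is entered.
    ls_l = [("lookup", "/home/user%d" % i) for i in range(1000)]
    print("early policy:", count_mounts(ls_l, mount_on_lookup=True))
    print("later policy:", count_mounts(ls_l, mount_on_lookup=False))
```

Under the early policy every entry listed is mounted; under the later policy a mount happens only for the directories a process actually enters.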

Autofs still has a few shortcomings:

- The autofs solution introduces a separate filesystem type, which has to be mounted for mount-objects to become available.

- The objects that need mounting under autofs are not self-describing but, instead, need mount maps.

AFS filesets, also known as volumes or folder collections, are distinguished subsets of the Unix mounted AFS filesystem. A fileset has a root directory and contains a standard directory tree, augmented with mount-point objects. Some limitations are present when AFS filesystem operations traverse filesets: hard links cannot span multiple filesets and rename operations can only work within filesets. The mount-point objects are represented as symbolic links which point to names that are recognized by AFS as an identifier of another fileset. When such objects are traversed, AFS contacts a Volume Location DataBase (VLDB) and finds the server of the fileset in question. The root directory of the fileset undergoes a lookup operation and the new fileset is grafted into the namespace. To avoid administrative difficulties, operations involving mount-objects, such as creation and removal, have to be done using ioctl commands issued by a filesystem utility. Coda and DCE/DFS filesets are handled quite similarly.

A few shortcomings of the AFS mount-objects are:

- AFS cannot graft other filesystems into its namespace.

- AFS mount-objects are subject to (fileset) mount storms.

- AFS mount-objects undergo complicated transitions from symbolic links to directories at mount time and then back again at unmount.

- The link count of the parent directory of mount-points is frequently not equal to the number of subdirectories plus two.

We will discuss the requirements for global namespaces in more detail, heading for a system that combines some of the benefits of the AFS and autofs solutions.

Implementing and Handling Mount-Objects

The mount-objects stored in the filesystem provide the glue to traverse from one fileset into another and from one filesystem into another inside a global namespace. When the objects are entered, which we define to be an opendir or lookup operation inside the directory, the namespace implementation must turn the mount-object into a proper local mount. The local mount continues to be monitored by an automount daemon.

We will break with the AFS tradition (now also in use in Microsoft’s DFS) to use symbolic links to represent mount-objects by exposing them as directories instead. By using directories, we avoid the problems of having to change object types when mount-objects become mounted and we correctly maintain the link count of the directory containing a mount-object.

As the global namespace handling involves overhead, the direct and simple representation of mount-objects as a directory is not particularly efficient: there are many directories in typical filesystems and usually directories will not represent a mount-object. The mount-object, cum directory, must carry extra information within its own attribute set which is not commonly used, and provides a hint that the object might be a mount-object.

We will use directories which have the setuid mode bit set (which is not interpreted by the kernel) and store the mount information in a file named mntinfo inside such a directory. The file contains sufficient information for a user level mount daemon to perform a full mount of the fileset or filesystem. As mount directives we propose that the format of this file be:

- For filesets: fileset://fileset_name[@cell.domain.name][/[...sub-directory]]

- For filesystems: unix://mount command arguments
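A minimal sketch of how a user level daemon might parse these two directive forms follows. It assumes the mntinfo file holds a single directive and that the unix:// arguments use shell-style quoting; neither is stated in the text. The type and function names are hypothetical.

```python
import shlex
from typing import List, NamedTuple, Optional


class FilesetDirective(NamedTuple):
    name: str             # fileset_name
    cell: Optional[str]   # cell.domain.name, or None if omitted
    subdir: str           # sub-directory inside the fileset, "" if omitted


class UnixDirective(NamedTuple):
    mount_args: List[str]  # arguments to hand to the mount command


def parse_mntinfo(text):
    """Parse the contents of a mntinfo file into a directive tuple."""
    line = text.strip()
    if line.startswith("fileset://"):
        rest = line[len("fileset://"):]
        target, _, subdir = rest.partition("/")
        name, _, cell = target.partition("@")
        if not name:
            raise ValueError("fileset directive has no fileset name")
        return FilesetDirective(name, cell or None, subdir)
    if line.startswith("unix://"):
        # Assumption: arguments are quoted the way a shell would quote them.
        args = shlex.split(line[len("unix://"):])
        if not args:
            raise ValueError("unix directive has no mount arguments")
        return UnixDirective(args)
    raise ValueError("unrecognized mount directive: %r" % line)
```

For example, parse_mntinfo("fileset://fset1@cell2") yields a FilesetDirective with name fset1, cell cell2, and an empty sub-directory.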

In either case, it is important that the effects of mount options can be exploited in two ways:

- Making them part of the mntinfo file.

- Making them part of site-specific default options for particular workstations.

For instance, in the case of user generated mount-objects, honoring the setuid bit when present on an executable file from an untrusted host is probably not desired.
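One way to combine the two sources of options is to merge them in layers, with later layers overriding earlier ones. The sketch below assumes a three-layer precedence (site defaults, then mntinfo, then options forced by the site) which the text does not specify; the function name is hypothetical, while the option names are standard mount options.

```python
# Pairs of standard mount options that cancel each other.
OPPOSITES = {
    "suid": "nosuid", "nosuid": "suid",
    "dev": "nodev", "nodev": "dev",
    "exec": "noexec", "noexec": "exec",
    "ro": "rw", "rw": "ro",
}


def merge_options(*layers):
    """Merge comma-separated option strings; later layers win.

    Assumed calling convention (not from the text):
        merge_options(site_defaults, mntinfo_options, site_forced)
    """
    merged = {}  # option name -> full option text, in insertion order
    for layer in layers:
        for opt in filter(None, layer.split(",")):
            key = opt.split("=", 1)[0]
            merged.pop(OPPOSITES.get(key, ""), None)  # drop e.g. suid when nosuid arrives
            merged.pop(key, None)                     # re-insert so the newest value is last
            merged[key] = opt
    return ",".join(merged.values())


if __name__ == "__main__":
    # A user generated mount-object asks for suid; the workstation forces nosuid.
    print(merge_options("ro", "rw,suid,rsize=8192", "nosuid,nodev"))
```

Placing the forced site options last means a user generated mntinfo file cannot re-enable setuid handling on a workstation whose administrator has disabled it.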

An implementation should not casually attempt to reuse successful mounts for two distinct mount-objects. Credentials of the process driving the operations may be different or the two mount-objects may request dissimilar options.

In order to activate mount-object handling, the filesystem containing the mount-objects needs a mount option which indicates that mount-objects must be honored. One implementation of such an option is to mount the filesystem under a global namespace filter driver. The filter driver can install new filesystem methods that intercept those operations that are related to global namespaces. Alternatively, the VFS can be modified to contain mount-object handling, but we prefer to impose no or minimal changes on the VFS for portability.

If we rely on the VFS to intercept the mount-objects we would mount a filesystem with:

mount -o mountobjs,otheropts,otherflags device directory

If one uses a filesystem filter, the mount command which would activate mount-object handling in a filesystem would look like:

mount -t gnsfs -o bottom_fs=somefs,otheropts device directory

The following summarizes the transient features of the mount-object handling:

- Detect mount-objects: The filtering layer’s lookup operation should call lookup in the filtered filesystem and install special handling if the inode found is a directory with setuid mode bit set.

- Lookup and opendir for mount-object: The mount-object itself should have a new lookup and opendir method, both of which should trigger a mount before handing over to the root directory of the newly mounted filesystem.

- lookup and opendir: This should make sure the filtered lookup and opendir methods for the newly mounted filesystem get executed when the automounter completes its work.
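The detection step in the list above can be sketched in user space. The real check would live in the filtering layer's lookup method inside the kernel; this is only a model of the test it performs (a directory with the setuid mode bit set, containing a file named mntinfo). The function names are hypothetical.

```python
import os
import stat

MNTINFO_NAME = "mntinfo"  # file name taken from the text


def looks_like_mount_object(mode):
    """True if a st_mode value describes a directory with the setuid bit set."""
    return stat.S_ISDIR(mode) and bool(mode & stat.S_ISUID)


def read_mount_directive(path):
    """Return the mntinfo contents for a mount-object, or None otherwise."""
    mode = os.lstat(path).st_mode  # lstat: do not follow symbolic links
    if not looks_like_mount_object(mode):
        return None
    try:
        with open(os.path.join(path, MNTINFO_NAME), encoding="utf-8") as f:
            return f.read().strip()
    except FileNotFoundError:
        # The setuid bit is only a hint; without mntinfo this is a plain directory.
        return None
```

The mode test is cheap and uses attributes the lookup has already fetched, so ordinary directories pay almost nothing; the mntinfo file is read only for the few directories that carry the hint.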

Before the mount can be executed, the filesystem will have to read the mntinfo file in the mount-object. This is passed to the automount daemon to process the mount command. Also passed is the identity of the user triggering the mount-event. The mount commands executed by the mount daemon should allow for considerable flexibility to allow for the case of filesets, namespace bindings, and simpler mounts. Also important is that, for example in NFS, the mount commands should be the fully enabled mount commands which can establish connections to servers.

We will use the infrastructure found in autofs to keep track of how long mount-objects have been mounted and unused, and unmount them appropriately.

- VFS fileset mount support: Fileset mounts should be arranged without a new superblock for each mount. This may require support from the VFS as these are not namespace bindings, but submounts that introduce a fileset root inode into the directory tree.

An important distinction between this solution and the AFS fileset mounting is that this system can handle mount-objects in any filesystem. For example, it is possible to graft a local /tmp filesystem into an NFS tree and have a global /tmp/global directory NFS mounted underneath. A distinction between the autofs solution and this system is that the mount-objects are part of the underlying filesystem, not part of an autofs filesystem. In addition, no map files are needed to identify the mount-objects and no external configuration data is needed to drive the process.

A final issue is that of invalidations of cached mount-point objects. Invalidations arising from modifications of the server directory shown under a mount-point are part of the standard update propagation from servers to clients, available in a clustered infrastructure. It is necessary to intercept such updates, even when a filesystem or fileset is mounted on top of the mount-object; this might well require some special filesystem methods.

The delicate issue here is what to do if the mount-object is changed. Once Unix processes start executing filesystem operations in a mounted fileset or filesystem, it is not possible to unmount that fileset. There are two proposals on how to handle mount-object updates:

- The filesystem or fileset is only unmounted when it is not busy.

- An aggressive solution could turn all inodes below a mount-point into bad inodes. This will likely lead to processes releasing the inodes sooner. The new information in the mntinfo file under the mount-object should be used to mount the new fileset or filesystem as soon as possible.

Namespace Bindings

The mechanisms outlined in the previous section show how to graft filesets and filesystem mount-points into a global namespace for a filesystem. We must still discuss what is needed to mount filesets and filesystems at multiple points. On file servers and on clients with persistent caches, different problems surface. The key concept to solving these issues is a binding in a namespace.

Linux 2.4 saw the introduction of the --bind option on the mount command. It allows a directory mounted in a filesystem to be re-mounted in another location. The implementation is straightforward but subtle:

- The dentry defining the filesystem name must be that of the mount-point.

- The inode defining the root directory must be the root of the namespace that is bound to the new location.

Normally dentries point to their inodes, and at the junctions in the namespace provided by bindings a vfs mount data structure is used to provide the namespace traversal. This data structure links the mount-point and the root of the new tree.

Using bindings, mounting filesets and filesystems at multiple points is not a problem.

The server considerations are best treated with an example. Consider an NFS file server augmented with lookup operations to support mount-objects. NFS exports can be made part of a global namespace using the mount-objects. On the file server for an export it is possible to mount the global namespace containing the NFS fileset, but on this server it is less optimal than using the local filesystem containing the NFS export. There are two issues that require attention.

First, we need OS support to mount the root of the NFS export into the global namespace. Linux 2.4 provides support for this with the --bind option on the mount command.

Second, it is important that the mount-objects stored in the NFS export receive different treatment when traversed within the global namespace than in the NFS export, where they are merely directories. This is solved by using the global namespace option at mount time. Without the option, mount-objects are passed through and remain simple directories, to be interpreted on the client as appropriate. With the option set, the file server has the opportunity to perform the mount and thus expose the fileset tree to NFS clients that cannot or should not do the job themselves.

On filesystem clients with persistent caches, similar considerations apply. The caches belonging to different filesets are most easily maintained in their own trees, but require visibility inside the global namespace too. These problems are solved in an analogous manner, outlined below.

The Fileset Location Database and the Automounter

The Linux autofs infrastructure provides the necessary enhancements to handle mount events triggered by lookup and opendir operations on mount-objects. When the corresponding requests reach the automount daemon the following should happen:

The daemon locates the necessary fileset information, based on the information found in the mntinfo file. The fileset mount information will in some cases be obtained from the server and may contain more detailed information than what is found in the mntinfo file. For example, a system may wish to have the flexibility to store the identity of the server for a fileset in a networked database and not in the mntinfo file. In other cases the information found in mntinfo may be sufficient for the automount daemon to directly execute its command.

The daemon locates and applies pertinent local information, allowing the system administrator to preclude access to other hosts or networks and to limit or override the user specified mount options.

In the case where the automounter needs to find information on a server, this mechanism could be implemented as an executable autofs/automount map. We expect to support LDAP-based mount maps in this fashion.
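The first two daemon steps (locating the fileset, then applying local policy) might be combined as in the following sketch. The location database is modelled as a plain dictionary standing in for a networked service such as LDAP; all names, hosts, and the exception type are hypothetical.

```python
class MountDenied(Exception):
    """Raised when local policy precludes access to the fileset's server."""


def locate_fileset(name, cell, location_db, allowed_servers):
    """Resolve a fileset to its server and apply the local host policy.

    location_db: mapping (fileset name, cell) -> server host name. This is
        a stand-in for a networked fileset location database.
    allowed_servers: set of hosts the local administrator permits.
    """
    server = location_db.get((name, cell))
    if server is None:
        raise LookupError("no location known for %s@%s" % (name, cell))
    if server not in allowed_servers:
        raise MountDenied("local policy precludes mounting from %s" % server)
    return server


if __name__ == "__main__":
    db = {("fset1", "cell2"): "srv2.example.org"}
    print(locate_fileset("fset1", "cell2", db, {"srv2.example.org"}))
```

Keeping the location lookup and the policy check in the daemon, rather than in mntinfo, lets a fileset move between servers without rewriting the mount-objects that refer to it.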

The daemon next executes the mount command using appropriate credentials. The credentials used may come from the process driving the operation, optional local information, or, in an advanced implementation, from directory meta-data when and where supported.

Expiries and invalidations are initiated by the daemon but performed by the kernel, through ioctls, as in autofs.
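The daemon side of expiry can be sketched as simple bookkeeping of last-use times. This models only the decision of what to expire; the kernel ioctls that perform the unmount are not modelled, and the class and its methods are hypothetical rather than part of autofs.

```python
import time


class IdleTracker:
    """Track when each mounted mount-object was last used."""

    def __init__(self, timeout, clock=time.monotonic):
        self.timeout = timeout      # seconds of idleness before expiry
        self.clock = clock
        self._last_used = {}        # mount-point path -> time of last use

    def touch(self, mount_point):
        """Record a use of the mount (also called when it is first mounted)."""
        self._last_used[mount_point] = self.clock()

    def forget(self, mount_point):
        """Stop tracking a mount-point once it has been unmounted."""
        self._last_used.pop(mount_point, None)

    def expired(self):
        """Return idle mount-points, deepest paths first.

        Deepest-first ordering is a choice made for this sketch so that a
        nested mount is released before the mount that contains it.
        """
        now = self.clock()
        idle = [mp for mp, t in self._last_used.items()
                if now - t >= self.timeout]
        return sorted(idle, key=lambda p: (-p.count("/"), p))
```

A daemon loop would periodically call expired, ask the kernel to unmount each entry that is not busy, and call forget for those that were released.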

The Global Namespace of the InterMezzo Filesystem

InterMezzo is a distributed filesystem with a global namespace. InterMezzo wraps around disk filesystems which it uses both as server-based persistent storage and as a persistent cache on the client. InterMezzo refers to such storage space as a cache. Caches can contain full replicas of filesets, as happens on a server of a fileset, or can contain partial caches of filesets, typical in the case of clients in installations with a large number of servers. The global namespace solution for InterMezzo needs to address three issues:

- Combine filesets from multiple servers into a single directory tree for clients, by exploiting mount-points.

- On an InterMezzo server for a fileset, combine the global namespace of filesets from other servers with the locally stored filesets. Avoid installing an unnecessary cache for the filesets located on this server.

- If a system is a client for a fileset, provide a directory tree for that fileset in the global namespace, as well as a directory tree outside of the global namespace which is not subject to automatic mounting of mount-objects.

InterMezzo in fact provides further flexibility by exporting multiple namespaces.

As alluded to above, there are two filesystem layouts of an InterMezzo cache. We refer to the layout provided by the disk filesystem as the physical layout and to that provided by the global namespace mechanism as the logical layout.

The physical layout is normally only used by InterMezzo daemons, such as cache purging daemons, but is in principle accessible to normal users of the filesystem as well. In the physical layout, the mount-points are not covered and receive updates normally. Updates to these objects should trigger messages to the mount daemon to adjust mount situations accordingly, using the filter mechanisms.

InterMezzo uses the namespace bindings discussed earlier to provide the physical and logical views of the cache. We’ll now show how the mechanisms discussed are used to provide a global namespace on clients. Figures 7.1 & 7.2 show the layout of the physical and logical namespaces, as well as the mount commands issued to achieve these views of the filesystem.

The following output from the mount command shows how the --bind option on the Linux 2.4 mount command has been used to build this namespace:

- $> mount

- ....

- /dev/sda1 on /cache type intermezzo (rw)

- /cache/cell1/fset1/ROOT on /izo type none (rw,bind)

- /cache/cell1/fset2/ROOT on /izo/dir/fset2-mtpt type none (rw,bind)

- /cache/cell2/fset1/ROOT on /izo/dir/fset1@cell2-mtpt type none (rw,bind)

On servers we face a somewhat different situation. Servers provide filesets from other servers, which are usually partial caches, but have InterMezzo caches which are full replicas for locally stored filesets. For example, consider the server of fset1@cell2 in figure 7.1.

The output from the mount command shows the mount arrangement:

- $> mount

- /dev/sda1 on /cache type intermezzo (rw)

- /dev/sda2 on /cache2 type intermezzo (rw)

- /cache/cell1/fset1/ROOT on /izo type none (rw,bind)

- /cache2/cell2/fset1/ROOT on /izo/dir/fset1@cell2-mtpt type none (rw,bind)

- /cache/cell1/fset2/ROOT on /izo/dir/fset1@cell2-mtpt/dirdir/fset2@cell1-mtpt type none (rw,bind)

Note that the bindings now combine physical fileset namespaces from more than one cache into the global namespace found under /izo.
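Because one binding in this arrangement sits below another, the order of the bind mounts matters: a mount-point must exist in the namespace before something can be bound beneath it. The sketch below orders a set of bindings by path depth and produces the corresponding mount --bind argument lists. The function name is hypothetical; the paths are taken from the mount output above.

```python
import posixpath


def bind_commands(bindings):
    """Return mount --bind argument lists, parent mount-points first.

    bindings: mapping of mount-point path -> source directory to bind there.
    """
    def depth(path):
        return posixpath.normpath(path).count("/")

    ordered = sorted(bindings, key=lambda mp: (depth(mp), mp))
    return [["mount", "--bind", bindings[mp], mp] for mp in ordered]


if __name__ == "__main__":
    server_bindings = {
        "/izo/dir/fset1@cell2-mtpt/dirdir/fset2@cell1-mtpt": "/cache/cell1/fset2/ROOT",
        "/izo/dir/fset1@cell2-mtpt": "/cache2/cell2/fset1/ROOT",
        "/izo": "/cache/cell1/fset1/ROOT",
    }
    for argv in bind_commands(server_bindings):
        print(" ".join(argv))
```

The commands are only printed here; actually running them requires root privileges and the cache filesystems to be mounted first.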

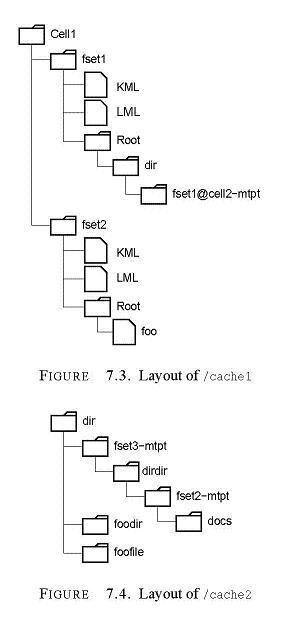

The caches now have a different layout. There are two caches, one for the locally stored fset1@cell2 fileset, mounted on /cache2 (figure 7.4), and a partial cache for the imported filesets, mounted on /cache1 (figure 7.3).

We believe that this provides all the flexibility InterMezzo filesets require.

Our mechanisms also allow the mix of different filesystem types. In InterMezzo installations where the root filesystem is of type InterMezzo, it can be attractive to have a /tmp directory backed by storage on a local disk. A subdirectory /tmp/global might be very useful to provide globally visible temporary files. The following mount commands show how this can be accomplished.

Mount-objects in Lustre

Describe here that Lustre has filesets XXX. Explain that traversing a mount-object may involve a query to LDAP to locate the meta-data server for a fileset.

Implementation

We have begun implementation of a filtering global namespace mechanism. XXX describe the implementation here.

Changelog

Version 3.0 (Dec. 2002)

- (1) P.D. Innes - updated and resized figures, added Changelog

Version 2.0 (Nov. 2002)

- (1) P.D. Innes - updated text

Version 1.0

- (1) L. Ward - original draft